Vercel’s supply-chain breach: one Roblox script away from your secrets

A malware-laced Roblox auto-farm script exposed Context.ai credentials, and OAuth tokens stolen from Context.ai in March 2026 led to a Vercel Google Workspace takeover.

In February, a Context.ai employee went hunting for Roblox auto-farm scripts on their work laptop. One of the downloads carried Lumma, a commodity infostealer that circulates on criminal marketplaces and has been reliably delivered through Roblox and other gaming cheats for years. The infected machine promptly exported every credential in the browser to a criminal buyer: Google Workspace logins, Supabase keys, Datadog tokens, Authkit credentials, and access to Context.ai's own support inbox.

Ten weeks later, that single infection had walked through an OAuth token into Vercel's internal environments, allowed whoever held the keys to read the environment variables of a subset of Vercel customers, and ended with a post on BreachForums demanding $2 million in Bitcoin.


This is a supply-chain compromise worth understanding in detail, because the shape of it will recur. Nothing about the attack was novel. None of it required real sophistication. It ran on commodity malware, an opt-in security flag that nobody had bothered to toggle, and the quiet accumulation of OAuth trust that has become the default posture of every developer-adjacent SaaS stack in 2026.

The attack chain, in order: a Roblox auto-farm download delivers the Lumma stealer (February 2026); the Context.ai employee's browser credentials are exfiltrated; Context.ai's OAuth tokens are stolen from its token store (March 2026); the attacker uses a stolen token against a Vercel employee's Allow All grant; that employee's Vercel Google Workspace account is taken over; the attacker moves laterally into Vercel's internal systems; and the non-sensitive environment variables of Vercel customers are enumerated.

What Vercel has confirmed

Vercel disclosed the breach on 19 April and updated its bulletin the following day. The company identified a limited subset of customers whose credentials were compromised, contacted them directly, and recommended immediate rotation. Services remained operational throughout. Google Mandiant is engaged, alongside other firms and law enforcement.

The initial access vector was, in Vercel's words, "a compromise of Context.ai, a third-party AI tool used by a Vercel employee". That employee had signed up to Context.ai's consumer-facing AI Office Suite, a product distinct from Context.ai's enterprise offering, using their Vercel-owned Google Workspace account, and granted it "Allow All" permissions. When the attackers obtained Context.ai's OAuth tokens, they inherited those permissions. From there, they took over the employee's Workspace account, moved laterally into Vercel's internal systems, and began reading the environment variables of customers who had not flagged them as sensitive.

Vercel's chief executive Guillermo Rauch filled in the rest on X. Every customer environment variable is encrypted at rest, but Vercel offers an additional "sensitive" designation that keeps the decrypted value inaccessible to anything other than the build and runtime environments. Variables without the flag remained visible to Vercel's internal tooling, and so, once the attackers were inside, to them.


That is the core technical failure, and it is a design choice rather than an accident. Default-safe would have meant every value was treated as sensitive until proven otherwise. Default opt-in meant most teams never ticked the box. Vercel has since shipped interface improvements to make the flag more visible, but the exposed customer credentials already sit in someone else's database.

On 21 April Vercel went further, confirming in collaboration with GitHub, Microsoft, npmjs and SocketSecurity that none of the npm packages it publishes (including Next.js) had been tampered with. The broader supply chain, they state, remains clean.

Context.ai's weekend

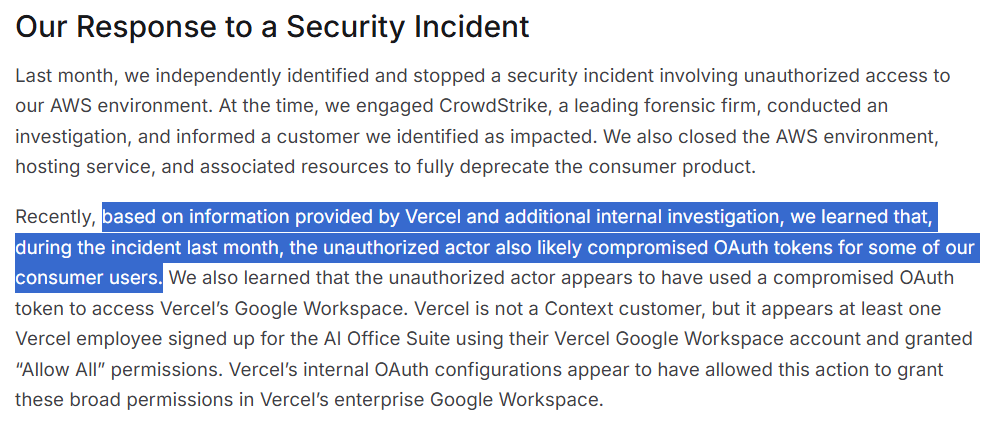

Context.ai's security update, published on 19 April (and updated since), reads slightly less well on a second pass. The company says it "independently identified and stopped" a security incident in March involving unauthorised access to its AWS environment. It engaged CrowdStrike to investigate, notified one customer it believed to be impacted, and closed the relevant AWS resources, deprecating the consumer AI Office Suite in the process. That was the account from March.

"Few things are more embarrassing for any company than to only learn from your customer that you have been breached." – Gergely Orosz

The problem with that account is what Vercel's disclosure forced Context.ai to admit on 19 April: the March incident had also exposed OAuth tokens belonging to some of its consumer users, and Context.ai only learnt this when Vercel told them. The emphasis in their bulletin is on Vercel's "internal OAuth configurations" having allowed the broad Allow All grant in the first place, which is technically fair and rhetorically pointed. Context.ai is a company whose product generates permission requests for a living, and one of the organisations it asked for Allow All permissions was the one that ended up explaining to Context.ai how badly it had been breached.

Gergely Orosz put the broader point plainly: every SaaS tool a team uses is a security risk of its own, especially when that tool needs broad access to email, internal documents, calendars, and whatever else an AI agent claims it needs to function. The awkwardness for Context.ai is not that it was breached, but that the forensic investigation it commissioned missed the OAuth token theft entirely, leaving a customer's security team to complete the picture a month later.

None of this, in fairness, is grounds to single out Context.ai. The category is the issue. AI productivity tools that live on "Allow All" OAuth grants against corporate Google Workspaces are a structural hazard, and almost every organisation has several.

The "accelerated by AI" line

Rauch's more colourful claim is that the attackers were "significantly accelerated by AI", a piece of framing he originally hedged and which most outlets have since repeated as established fact. I am unconvinced. What happened at Vercel needed commodity malware, a compromised token, and patience. Enumerating environment variables and pivoting through a familiar SaaS stack is tedious, not fast. The attackers may well have used a model to write glue code, as everyone else does in 2026, but the choice of verb matters. Attackers accelerated by AI, and attackers who are motivated and lightly tooled, look identical from the blue side. The framing moves attention away from the unexciting root cause: an unreviewed OAuth grant on a corporate Workspace account allowed an infection at a small vendor to reach the inside of a platform worth billions.

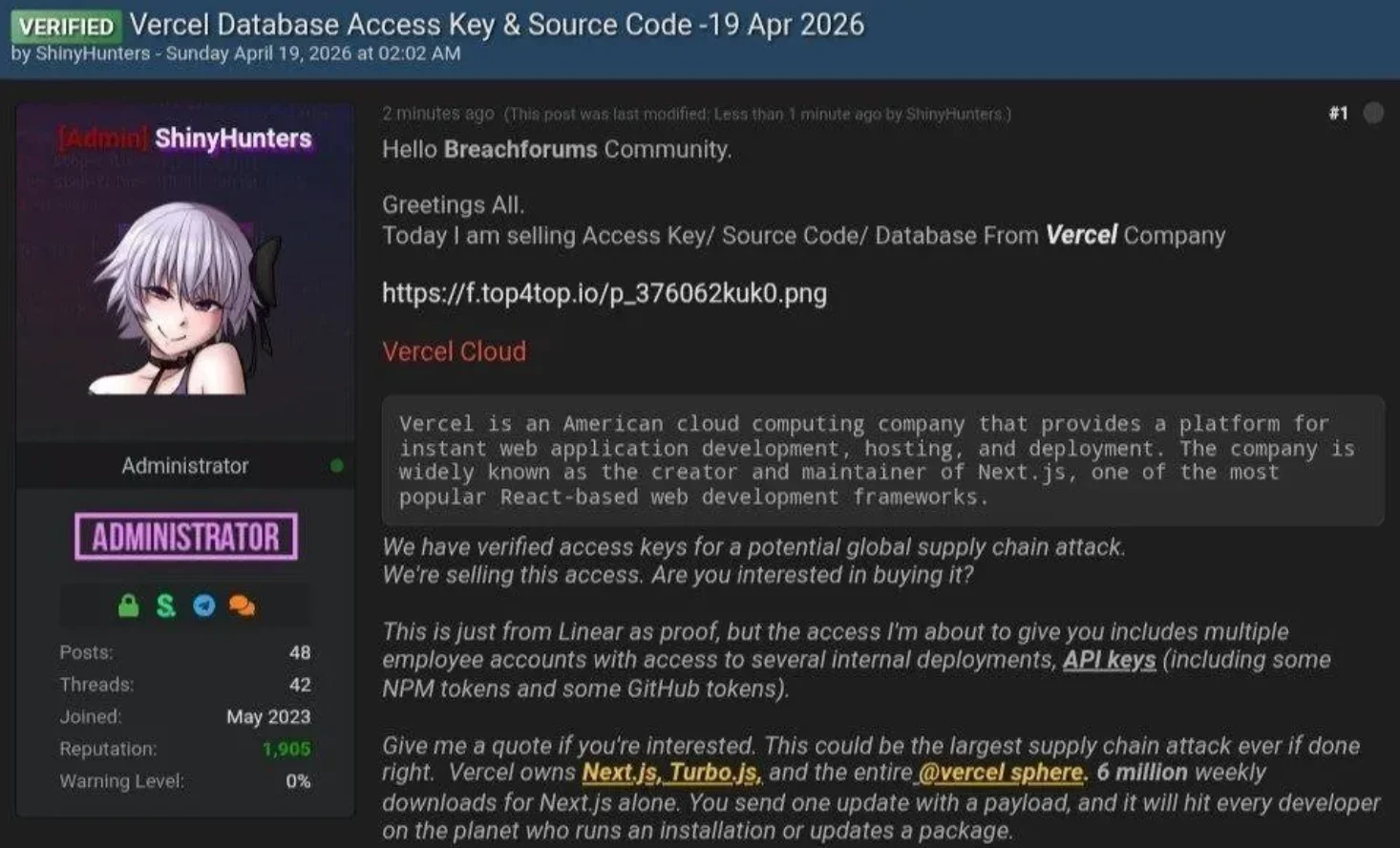

The ShinyHunters post on BreachForums belongs in the same bucket of noise. BleepingComputer reporting has actors linked to recent genuine ShinyHunters incidents denying involvement, and the original ShinyHunters operation has been the subject of enough arrests over the last eighteen months that the brand is largely a flag of convenience for impersonators. There may well be stolen data for sale. The attribution claim should not be believed.

What to do if you deploy on Vercel

If Vercel has not contacted you, your credentials are not known to have been exposed. Treat the following as prudent hygiene anyway, because the attack pattern is generic and the next vendor in the chain will not be Context.ai.

Start with environment variables. Open your Vercel team, go to Settings, and review every variable on every project. Any value that is a secret and is not flagged sensitive should be treated as exposed. Rotate them before you do anything else, including deleting projects or accounts. Vercel has now explicitly warned that deletion does not revoke or purge compromised secrets; the old values can still be used by whoever has them.
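On a team with many projects, the review can be scripted. What follows is a minimal sketch, assuming Vercel's REST API lists a project's variables at `GET /v10/projects/{idOrName}/env` and returns an `envs` array whose entries carry a `key` and a `type` such as `plain`, `encrypted`, `sensitive` or `system`; check the current API reference before relying on that. The name heuristic (`SECRET_HINT`) and the helper names are hypothetical.

```python
import json
import re
import urllib.request
from typing import Optional

# Assumption: GET /v10/projects/{idOrName}/env returns {"envs": [...]},
# each entry with a "key" and a "type" ("plain", "encrypted", "sensitive", "system", ...).
API_BASE = "https://api.vercel.com"

# Hypothetical heuristic: variable names that usually hold credentials.
SECRET_HINT = re.compile(
    r"KEY|SECRET|TOKEN|PASSW|DSN|PRIVATE|CREDENTIAL|DATABASE_URL", re.IGNORECASE
)


def fetch_envs(project: str, token: str, team_id: Optional[str] = None) -> list:
    """Fetch one project's environment-variable entries from the Vercel REST API."""
    url = f"{API_BASE}/v10/projects/{project}/env"
    if team_id:
        url += f"?teamId={team_id}"
    request = urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})
    with urllib.request.urlopen(request) as response:
        return json.load(response).get("envs", [])


def exposed_candidates(envs: list) -> list:
    """Keys of user-defined variables not flagged sensitive, secret-looking names first."""
    unflagged = [e["key"] for e in envs if e.get("type") not in ("sensitive", "system")]
    # Secret-looking names sort first (False < True), then alphabetically.
    return sorted(unflagged, key=lambda k: (SECRET_HINT.search(k) is None, k))
```

For example, `exposed_candidates(fetch_envs("my-app", token))`, where `my-app` and `token` are placeholders for your project name and a Vercel access token. Everything it returns belongs on the rotation list; the name heuristic only decides which entries to look at first.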

Rotate infrastructure credentials first, then third-party keys, then observability tokens, then JWT and session secrets. For each rotation, generate the new value upstream, update it in Vercel with the sensitive flag set, redeploy without the build cache, verify it works, and only then revoke the old value. New environment variables now default to sensitive, which is the single biggest product improvement to come out of this incident.
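The ordering and the revoke-last rule fit in a small plan generator. This is a minimal sketch: the category labels and the `Secret` type are hypothetical, and assigning each variable to a category is still up to you.

```python
from dataclasses import dataclass

# Rotation order described above: lower numbers rotate first.
# The category names are hypothetical labels, not a Vercel concept.
PRIORITY = {"infrastructure": 0, "third_party": 1, "observability": 2, "session": 3}

# Per-secret steps; revoking the old value is deliberately last.
STEPS = (
    "generate new value upstream",
    "update in Vercel with the sensitive flag set",
    "redeploy without the build cache",
    "verify the deployment works",
    "revoke the old value",
)


@dataclass(frozen=True)
class Secret:
    name: str
    category: str  # must be one of the PRIORITY keys


def rotation_plan(secrets):
    """Return (secret name, step) pairs in the order they should be carried out."""
    # An unknown category raises KeyError, so nothing silently drops out of the order.
    ordered = sorted(secrets, key=lambda s: (PRIORITY[s.category], s.name))
    return [(secret.name, step) for secret in ordered for step in STEPS]
```

Printing the plan gives a checklist that finishes each secret end to end before starting the next, which keeps a half-rotated credential from lingering while you move on.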

Then work through the rest of the bulletin's recommendations. Your activity log is available on every plan; Audit Log events are Enterprise-only but worth pulling if you have access. Look for unexpected deployments, new team members, or integrations added since the beginning of April. Set Deployment Protection to Standard at a minimum, and rotate your protection-bypass tokens if you use them. If you run the Vercel-GitHub integration, review its repository scope and reinstall it with a narrower permission set if anything looks unfamiliar.
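If you export activity entries for offline review, the triage is a date-and-type filter. A minimal sketch, assuming a hypothetical export in which each entry is a dict with an ISO 8601 `created_at` string and a `type`; the event type names in `WATCHLIST` are illustrative placeholders, not Vercel's actual identifiers.

```python
from datetime import datetime, timezone

# Hypothetical event type names; map these to whatever your export actually uses.
WATCHLIST = {"deployment_created", "team_member_added", "integration_installed"}

# "Since the beginning of April", in UTC.
CUTOFF = datetime(2026, 4, 1, tzinfo=timezone.utc)


def parse_ts(value: str) -> datetime:
    """Parse an ISO 8601 timestamp, accepting a trailing 'Z' for UTC."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def suspicious_events(events, cutoff=CUTOFF, watchlist=WATCHLIST):
    """Return watched event types at or after the cutoff, oldest first."""
    hits = [
        (parse_ts(event["created_at"]), event)
        for event in events
        if event["type"] in watchlist
    ]
    hits = [pair for pair in hits if pair[0] >= cutoff]
    hits.sort(key=lambda pair: pair[0])
    return [event for _, event in hits]
```

Anything it returns still needs a human to decide whether it was expected; the filter only shrinks the pile.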

While you are there, enable multi-factor authentication on every account that does not already have it. Vercel now recommends at least two authentication methods.

If you run a Google Workspace

Vercel has published one indicator of compromise: an OAuth client ID, 110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com. Google has already suspended the app itself, but existing grants may remain in your tenant and are worth cleaning up.

In the Google Admin Console, go to Security, then Access and data control, then API controls, and open the list of Accessed apps. Filter by ID and paste the full client string. If nothing comes up, no user in your tenant consented to the app. If a row appears, open it, set the access to Blocked, and pull the list of users who authorised it. For each of them, review the OAuth log under Reporting, then Audit and investigation, then OAuth log events for the period since the start of March. Any Gmail, Drive, or Calendar scope usage tied to that client ID should be treated as potential data exposure for that user. Reset their passwords, sign them out of all sessions, and check their Drive sharing, Gmail forwarding rules, and filter settings for anything unexpected.
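For larger tenants, the per-user part of this check can be scripted against the Admin SDK Directory API, whose `tokens.list` and `tokens.delete` methods expose each user's third-party OAuth grants. A minimal sketch, assuming you already have a `google-api-python-client` service built for `directory_v1` with credentials carrying the `admin.directory.user.security` scope; the helper names are hypothetical, and this complements rather than replaces blocking the app in the console.

```python
# Indicator of compromise published by Vercel.
IOC_CLIENT_ID = "110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com"


def users_with_grant(tokens_by_user, client_id=IOC_CLIENT_ID):
    """Return the users whose OAuth token list includes the given client ID."""
    return sorted(
        user
        for user, tokens in tokens_by_user.items()
        if any(token.get("clientId") == client_id for token in tokens)
    )


def fetch_tokens(directory, user_emails):
    """Collect each user's third-party OAuth grants via the Directory API.

    `directory` is assumed to come from
    googleapiclient.discovery.build("admin", "directory_v1", credentials=...).
    """
    return {
        email: directory.tokens().list(userKey=email).execute().get("items", [])
        for email in user_emails
    }


def revoke_grant(directory, user_email, client_id=IOC_CLIENT_ID):
    """Delete one user's grant to the client ID."""
    directory.tokens().delete(userKey=user_email, clientId=client_id).execute()
```

`users_with_grant(fetch_tokens(directory, emails))` gives the list of people whose OAuth log events, sessions, forwarding rules and sharing settings need the review described above.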

Individual Google users without Workspace admin access can do the same check at myaccount.google.com/permissions. The interface does not surface numeric client IDs, so search by name for anything labelled "Context", "Context.ai", or "Context AI Office Suite" and revoke on sight.

What this was really about

Nothing about this incident required a novel exploit or a nation-state budget. It needed an infostealer from a criminal marketplace, a consumer AI tool with a liberal OAuth scope, and a target with an opt-in security feature that was not, in practice, opted in to. Every organisation I know of has the ingredients on hand right now.

Two changes will pay for themselves. The first is treating OAuth grants against corporate Google Workspaces the way a competent IT function already treats VPN access: inventoried, reviewed, and removed when they stop earning their keep. The second is assuming that any secret not stored behind a named, default-on protection is readable, and rotating accordingly. Neither requires a budget line. Both ought to be habits by now. The Vercel-Context.ai chain is a reminder of what the cost looks like when they are not.
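Inventorying grants is the part most organisations skip. Reusing the per-user token lists the Directory API returns (`clientId`, `displayText`, `scopes`), a minimal sketch of a first-pass review groups apps by the users who gave them broad Gmail, Drive or Calendar access. The scope set below is a non-exhaustive assumption; extend it to match your own policy.

```python
# A few broad Google scopes worth reviewing first (non-exhaustive).
BROAD_SCOPES = {
    "https://mail.google.com/",
    "https://www.googleapis.com/auth/gmail.modify",
    "https://www.googleapis.com/auth/drive",
    "https://www.googleapis.com/auth/calendar",
}


def broad_grants(tokens_by_user):
    """Map each app to the sorted users who granted it at least one broad scope."""
    inventory = {}
    for user, tokens in tokens_by_user.items():
        for token in tokens:
            if BROAD_SCOPES.intersection(token.get("scopes", [])):
                # Prefer the human-readable name; fall back to the client ID.
                app = token.get("displayText") or token.get("clientId", "unknown")
                inventory.setdefault(app, set()).add(user)
    return {app: sorted(users) for app, users in sorted(inventory.items())}
```

Each app in the output is a candidate for the "still earning its keep" question; anything nobody can justify gets removed.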